An old post describes how to install IIS on Windows 11. After a Windows reinstall, however, you may need … More

Category: Data Mining

AI Drawings

What do you see in this image, generated with code I partially built with ChatGPT?

Accessing Netflix “secret” codes

Just go to http://www.netflix.com/browse/genre/X, where X is a Netflix code. Lists of Netflix codes are available all over the Web by … More

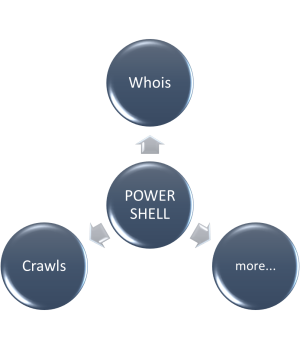

Running Whois and Crawling URIs from PowerShell

In a previous post I described the installation of Whois on Windows 11 from the Microsoft SysInternals site (https://irthoughts.wordpress.com/2021/12/29/installing-whois-on-windows-11/). This time … More

The Extended Boolean Model for Information Retrieval

The Extended Boolean Model for Information Retrieval. This is an IR tutorial I wrote circa 2006 (http://www.minerazzi.com/tutorials/term-vector-6.pdf). It may be … More

Cosine Similarity and Overlapping Values

The NIH Value Set Authority Center (VSAC) has oldie-but-goodie documentation on cosine similarity and overlapping values at … More

Recursive Mini Converters

Recursive Mini Converters is our newest tool. This tool is a proof of concept for the notion of recursive forms … More

Fast reverse complement computation of DNA sequences without string concatenation loops

Arguments for why string concatenation loops (SCL) are a bad programming practice were given in a previous post. You can … More
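As an illustration of the idea in the title, here is a minimal Python sketch that computes a reverse complement without building the result one character at a time. It is not the code from the post itself. The function name `reverse_complement` and the table `_COMPLEMENT` are hypothetical, and the sketch assumes the input contains only the bases A, C, G, and T (other characters pass through unchanged).

```python
# Translation table mapping each base to its complement (upper and lower case).
# Characters not listed here are left unchanged by str.translate().
_COMPLEMENT = str.maketrans("ACGTacgt", "TGCAtgca")


def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA sequence."""
    # translate() complements the whole string in one pass,
    # and the [::-1] slice reverses it; no concatenation loop is needed.
    return seq.translate(_COMPLEMENT)[::-1]


print(reverse_complement("ATGCCG"))  # prints CGGCAT
```

Both steps create the new string in a single operation each, which avoids the repeated reallocation that a character-by-character `result += ...` loop can cause.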

Vector Space Explorer Tool

Vector Space Explorer Tool is a new tool from Minerazzi, available now at http://www.minerazzi.com/tools/vector-space-explorer/explorer.php. VSE is aimed at exploring combinations … More

Revamping the Cosine Similarity Calculator Tool

One of the most interesting problems in data mining and cluster analysis relates to the transformation of similarities into distances … More
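The excerpt does not show which transformation the post uses. As one commonly used possibility, the sketch below converts a cosine similarity into an angular distance, `arccos(similarity) / pi`, which ranges from 0 (same direction) to 1 (opposite directions). The function names are hypothetical and the choice of angular distance is an assumption, not necessarily the calculator's method.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length numeric vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


def angular_distance(u, v):
    """Angular distance in [0, 1] derived from the cosine similarity."""
    # Clamp to [-1, 1] so floating-point rounding cannot break acos().
    sim = max(-1.0, min(1.0, cosine_similarity(u, v)))
    return math.acos(sim) / math.pi


print(angular_distance([1, 0], [0, 1]))
```

Unlike the simpler `1 - similarity`, the angular form satisfies the triangle inequality, which matters when the distances feed a clustering method that assumes a true metric.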
