Repairing corrupted files in Windows computers can be done with the Command Prompt check disk tool (CHKDSK). Using Command Prompt…

Creating or undoing system restore points in Windows 11

To create a restore point: To undo a restore point:

Installing PHP locally on a Windows 11 Internet Information Services (IIS) Environment

This tutorial applies to installations on a local computer with Windows 11, which comes with Internet Information Services (IIS). The…

Missing IIS after reinstalling Windows 11?

An old post describes how to install IIS on Windows 11. After a Windows reinstall, however, you may need…

SATOR Square: An unsolved puzzle?

Oldie, but goodie: The SATOR square, SATOR AREPO TENET OPERA ROTAS. This is one of the most well-known unsolved word puzzles, with a history of…

Ratio of averages or average of ratios?

Should we report a ratio of averages (RA) or an average of ratios (AR)? The answer depends on what we…
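To see that the two quantities can differ, here is a minimal Python sketch. The paired values `xs` and `ys` are hypothetical and are not taken from the post; the function names are likewise illustrative. Exact fractions are used so that no rounding obscures the comparison.

```python
from fractions import Fraction


def ratio_of_averages(xs, ys):
    """RA: mean of the numerators divided by the mean of the denominators."""
    mean_x = Fraction(sum(xs), len(xs))
    mean_y = Fraction(sum(ys), len(ys))
    return mean_x / mean_y


def average_of_ratios(xs, ys):
    """AR: mean of the per-pair ratios x_i / y_i."""
    ratios = [Fraction(x, y) for x, y in zip(xs, ys)]
    return sum(ratios) / len(ratios)


# Hypothetical paired observations (an assumption, not data from the post).
xs = [1, 2, 3]
ys = [2, 4, 12]

ra = ratio_of_averages(xs, ys)  # (6/3) / (18/3) = 1/3
ar = average_of_ratios(xs, ys)  # (1/2 + 1/2 + 1/4) / 3 = 5/12
print(f"RA = {ra}, AR = {ar}")
```

On this data RA is 1/3 while AR is 5/12, so the choice of statistic changes the reported number.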

Having Fun with Eigenvalues

If A is a 3×3 matrix with eigenvalues 2, 3, 5, and B is its inverse matrix, then: Trace of…
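The excerpt is cut off, so the following is only a sketch of the standard facts it appears to rely on, not the post's own working: the eigenvalues of an inverse matrix are the reciprocals of the original eigenvalues, the trace is the sum of the eigenvalues, and the determinant is their product. The variable names are illustrative.

```python
from fractions import Fraction
from math import prod

# Eigenvalues of A, as given in the excerpt.
eigenvalues_a = [2, 3, 5]

# The eigenvalues of B = A^(-1) are the reciprocals of those of A.
eigenvalues_b = [Fraction(1, lam) for lam in eigenvalues_a]

trace_a = sum(eigenvalues_a)   # 2 + 3 + 5 = 10
det_a = prod(eigenvalues_a)    # 2 * 3 * 5 = 30
trace_b = sum(eigenvalues_b)   # 1/2 + 1/3 + 1/5 = 31/30
det_b = prod(eigenvalues_b)    # 1 / det(A) = 1/30

print(f"trace(A) = {trace_a}, det(A) = {det_a}")
print(f"trace(B) = {trace_b}, det(B) = {det_b}")
```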

Artificial Intelligence, Brain Decoding, and Mind Reading. A step closer to Mind Retrieval

Artificial Intelligence and Brain Decoding (“mind reading”) https://edition.cnn.com/2023/05/23/tech/chatgpt-mind-reading/index.html We are getting closer to Mind Retrieval. Check relevant posts on this…

Unicode mnemonic for common diacritics

On May 9, 2019, a Unicode mnemonic for recalling the decimal notation of common diacritics was presented. A tutorial…

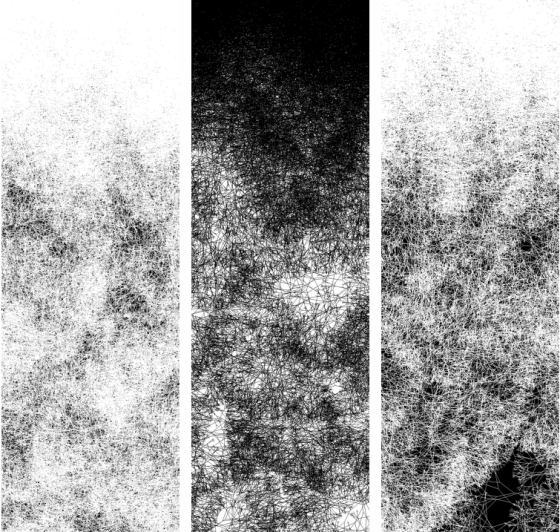

A Statistical Approach to Automated Generation of Totem-like Patterns by Drawing Straight Lines

If something is part random and part…
